Starving Genies

What 3X: Explore/Expand/Extract says about throttling

Since my genies seem to have all gone to rehab at the same time, I have leisure (!?) to write this.

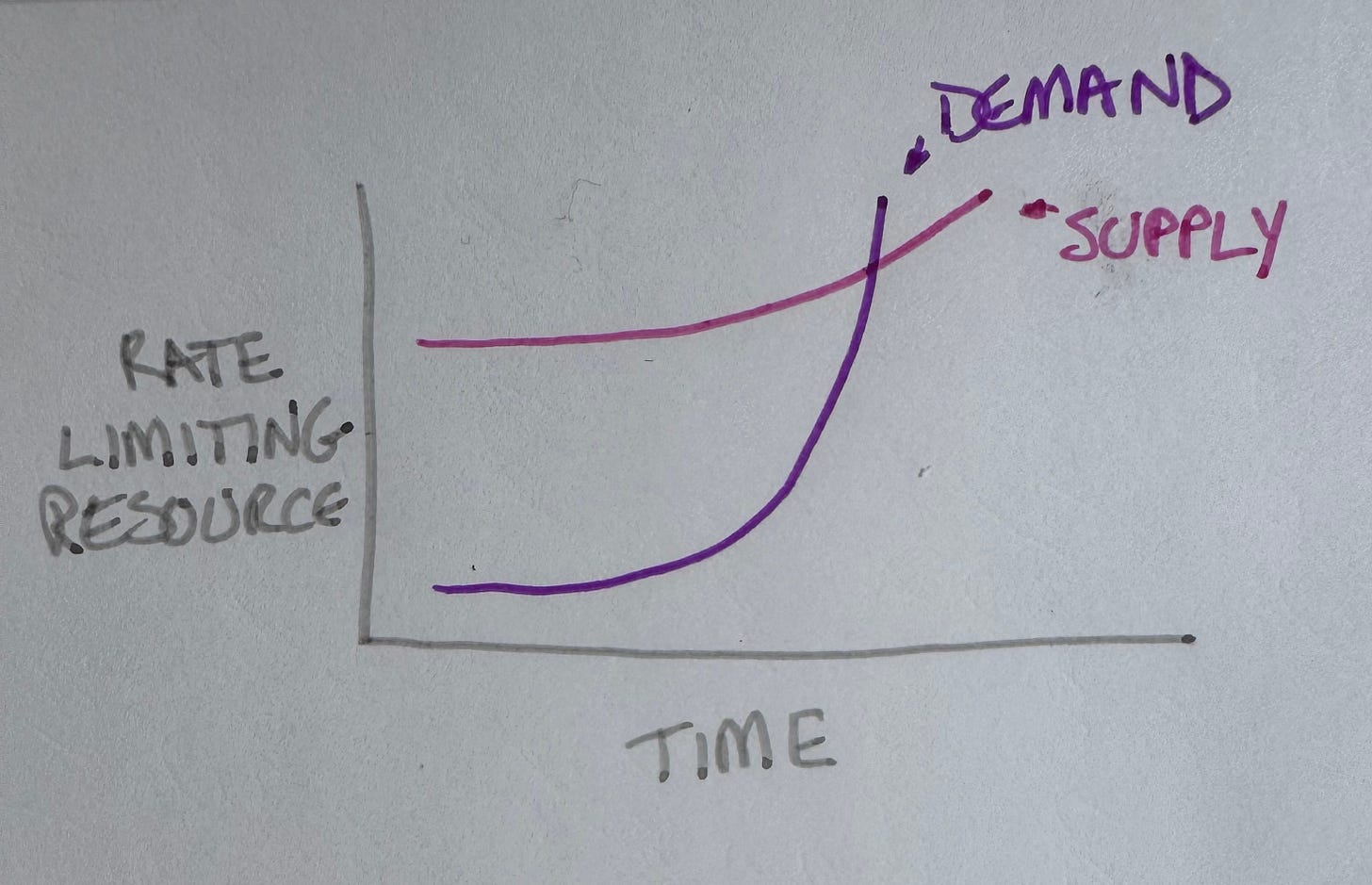

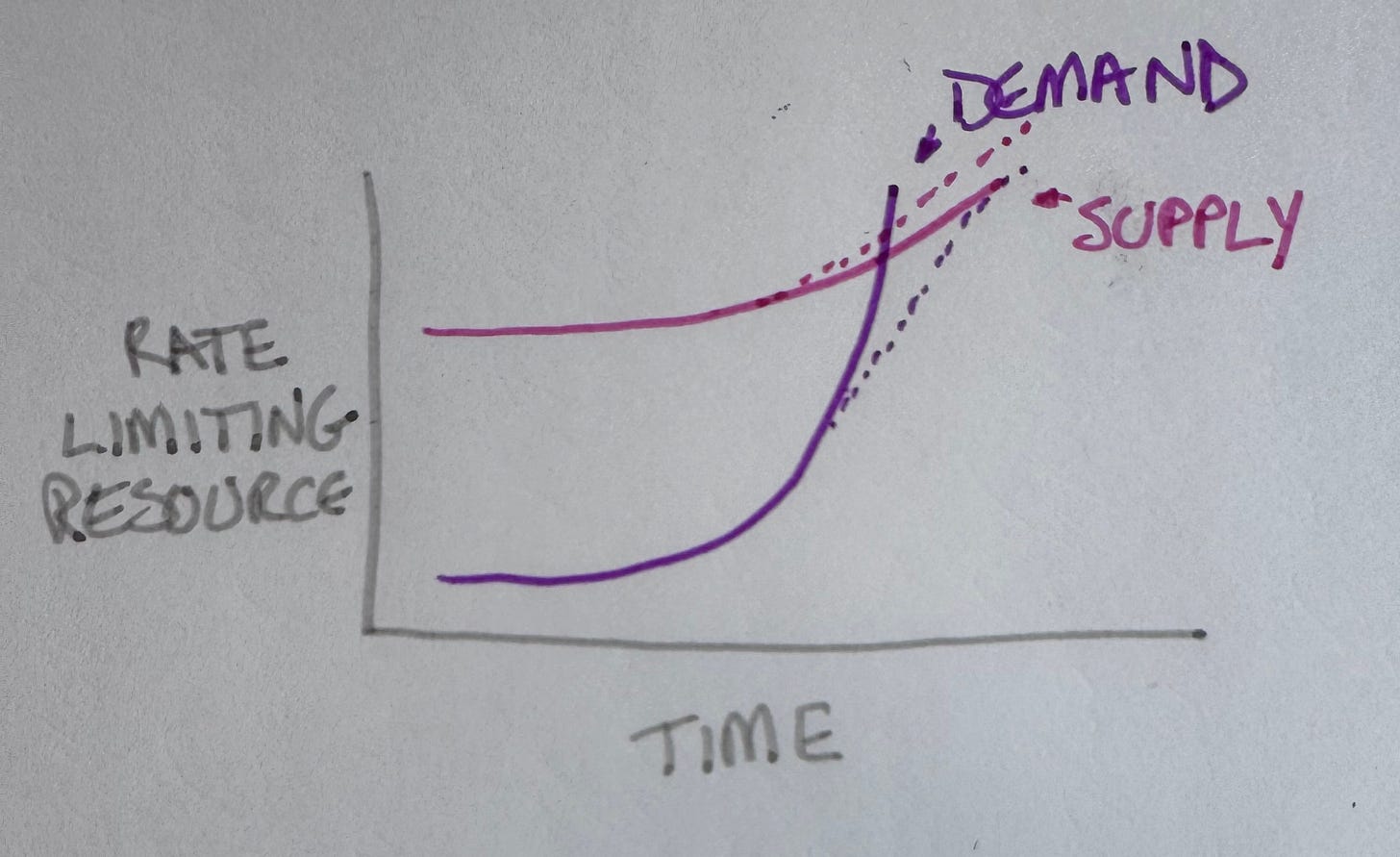

In the Expand phase, growth isn’t a curve, it’s a staircase. You grow until you approach a ceiling: some rate-limiting resource.

Either increase the supply of that resource or reduce the consumption until you get back to growth. Disaster averted!

Then the next rate-limiting resource looms and you repeat it. Then the next one. Then the next.

Eventually you know (from bumping into them) what the rate-limiting resources are and how to keep the supply curve above the demand curve. Then you can shift into Extract mode.
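The staircase can be sketched as a toy simulation. Everything in it is an assumption made for illustration: the resource names, the numbers, the `simulate_expand` function, and the rule that a stalled period is spent raising the tightest ceiling by a fixed multiple. It is not a model of any real company.

```python
def simulate_expand(capacities, demand, growth, boost, periods):
    """Toy Expand loop: grow until the tightest ceiling blocks you, then raise it.

    capacities: hypothetical resource name -> current ceiling
    demand:     starting demand (assumed to be below every ceiling)
    growth:     multiplier applied to demand in an unconstrained period
    boost:      multiplier applied to a ceiling when you fix that bottleneck
    """
    capacities = dict(capacities)  # copy so the caller's dict is untouched
    history = []      # demand at the end of each period
    bottlenecks = []  # rate-limiting resources, in the order you bumped into them
    for _ in range(periods):
        # The rate-limiting resource is whichever ceiling is lowest right now.
        limiter = min(capacities, key=capacities.get)
        wanted = demand * growth
        if wanted > capacities[limiter]:
            # Flat step of the staircase: no growth this period,
            # the effort goes into increasing supply of the limiter.
            capacities[limiter] *= boost
            bottlenecks.append(limiter)
        else:
            demand = wanted
        history.append(demand)
    return history, bottlenecks


history, bottlenecks = simulate_expand(
    {"chips": 100, "power": 250, "support": 600},  # made-up ceilings
    demand=10, growth=2, boost=10, periods=10,
)
print(history)      # [20, 40, 80, 80, 160, 160, 320, 320, 640, 640]
print(bottlenecks)  # ['chips', 'power', 'support', 'chips']
```

The flat steps in `history` are the near-death moments, and `bottlenecks` is the list you only learn by bumping into each ceiling in turn.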

(Successful companies often retcon all these near-death Expand experiences to make their success seem inevitable.)

Genies

Everybody cuts limits at once. Not a coincidence, a signal.

The genie is in Expand. Hard. Usage is growing faster than almost any product in history.

When you’re about to hit the ceiling, you have two choices. To bend the supply curve up—build more data centers, get more chips, make inference cheaper. To bend the demand curve down—slow the growth, ration the usage, make free/cheap tiers less attractive.

The model providers are doing both, but bending demand down is the surprise move, at least to me. Who shuts the rocket motor off mid-air?

The twist here is competitive dynamics. Normally, bending demand down in the face of competition is suicide. Users leave. They go to the competitor. You lose. Expand doesn’t forgive.

The Bottleneck?

In Expand, the first question is always: what’s the next rate-limiting resource?

Chips? Nvidia supply is constrained, H100s are scarce, everyone’s fighting for allocation. Except Google makes their own. Amazon makes their own. Anthropic has a preferential supply agreement. And all three still cut limits at the same time. Not chips.

Raw compute capacity? Data centers, cooling, power delivery. Real constraints, multi-year buildouts. But physical constraints hit different companies at different times — different footprints, different geographies. You’d see variation. You see synchrony. Not physical capacity.

The economics of inference? The ratio of what it costs to serve a query to what users pay. Broken at scale, especially for free users. This one feels right until you remember that they have basically unlimited capital. The compute bill is large but fundable. They’re not cutting limits because they literally can’t afford to serve you.

So what is it?

The story. Specifically, how long investors will fund giving away expensive capability while waiting for profits to catch up. That story has a shelf life. At some point you have to demonstrate a path to profitability, not just assert one. Usage limits are evidence you’re managing toward that—it’s a signal to investors, not a “we’re running out of money” decision.

That’s why all three moved together. The same investor class, the same stage, the same moment when “trust us, it’ll work out” stops being enough.

The bottleneck isn’t engineering. It’s narrative.

It’s a Race

What breaks the model cartel? Someone bends the supply curve up. Fixes the narrative. Inference gets cheaper through distillation, caching, smarter routing to smaller models. Custom silicon matures. New data center capacity comes online. One company gets meaningfully ahead on unit economics and can afford to open the throttle while competitors can’t.

That company wins the next wave.

What’s Next?

I write about augmented coding—developers working with genies all day. Usage limits bite differently for us. A power user hitting a daily cap mid-flow isn’t mildly inconvenienced. Their work stops. They have to, I don’t know, write another blog post.

For developers, caps pressure them toward the API—explicit pricing, higher ceilings, no daily cliff. For everyone else, it’s just a wall.

Limits split the user base: casual users get free but capped, developers get metered but uncapped, and the middle—technical-but-not-API-savvy power users—gets squeezed into paid consumer tiers. That’s the conversion pressure the companies actually want, even if a bit more revenue isn’t going to change the global constraint.
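A minimal sketch of the difference between a daily cliff and a meter, under loud assumptions: the class names, the unit of "usage", the cap, and the price are all hypothetical and are not any provider's actual plan or API.

```python
from dataclasses import dataclass


@dataclass
class CappedTier:
    """Hypothetical consumer tier: free or flat-priced, but with a hard daily cap."""
    daily_cap: int
    used: int = 0

    def request(self, units: int) -> bool:
        # The cliff: once the cap would be exceeded, work simply stops.
        if self.used + units > self.daily_cap:
            return False
        self.used += units
        return True


@dataclass
class MeteredTier:
    """Hypothetical API-style tier: no daily cap, every unit is billed."""
    price_cents_per_unit: int
    bill_cents: int = 0

    def request(self, units: int) -> bool:
        # No wall, just a growing bill.
        self.bill_cents += units * self.price_cents_per_unit
        return True


capped = CappedTier(daily_cap=100)
metered = MeteredTier(price_cents_per_unit=2)

for session in (60, 60):  # two work sessions of 60 units each
    print(capped.request(session), metered.request(session))

print(capped.used, metered.bill_cents)
```

In this toy run the second session is refused on the capped tier and goes through on the metered one, which ends the day with a bill instead of a wall.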

Uncomfortable question: are the limits temporary—a bridge while supply catches up—or are they the beginning of a new equilibrium where heavy AI usage is a premium product, not a default?

In 3X terms: is this a brief pause on the way to the next Expand staircase, or the beginning of Extract—where growth slows, margins matter, and rationing becomes a feature?

I don’t know. But I’m watching which company bends supply up first. That tells you everything about where Expand goes next.

My two cents' worth: everyone I know who has the skill and money is exploring the limits of locally running LLMs. At the same time, the Chinese see an opportunity to diminish the competitive threat from the USA by releasing smaller, more powerful models. These can be run locally and come close to proprietary models 10-100x their size. We're also seeing self-improving models and agent teams working well. It's hard to see any of the proprietary model providers surviving, let alone all, and the investment in new data-centres will steadily decline. All this against the backdrop of a global energy crisis. Fun times.

Beck's investor confidence argument is compelling, but I'd split the constraint in two. Investor signaling is real. But the engineering part is also real: the throttling I saw last month wasn't uniform across use types, which suggests compute cost varies a lot by task. Long agentic sessions got cut harder than single completions. That looks like an infrastructure constraint, not just signaling.

Both can be true at once. More interesting question: if limits are primarily narrative and lift when funding stabilizes, does demand catch up fast enough to immediately recreate the constraint? That cycle could run for a while before the infrastructure actually catches up.